Ring Signatures (Part 1): Schnorr Identity Protocol

Interactive Zero-Knowledge Proof Schemes

Interactive Zero-Knowledge Proof Schemes

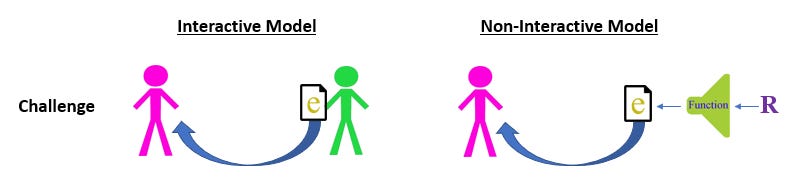

Before we get into ring signatures, we need to differentiate between interactive and noninteractive proofs of knowledge. Interactive proofs engage in a pre-processing round where the two parties (prover and verifier) exchange relevant information in order to construct a signature. The hand waving overview looks like this:

The prover has a keypair (x,P), where x is the private key and P is the public key. P is publicly broadcast, while x remains hidden to the prover alone.

When dealing with signatures, specifically of the Schnorr family, our commitment is constructed by picking a random nonce value r, then encrypting it to create R. Specifically, the encryption process works by performing R=r*G (mod n), where G is some specified elliptic curve generator point. The discreet logarithm assumption now makes it safe for us to give R out to the rest of the world, because trying to reverse engineer r given only R is computationally infeasible.

The challenge is some randomly selected scalar of the verifier’s choosing. We call this e. The verifier ships e back to the prover only after he is given R by the prover.

Finally, after receiving the challenge back from the verifier, the prover takes x, e and r and uses them as inputs to the signature function: s = r+e*x. This is the response.

At this point the verifier can check the validity of the response by feeding P, R, s, e (all of which are public parameters) as inputs into the verification algorithm. The algorithm works by first taking the signature value, then multiplying it by the generator point G. After calculating this sG value, the verifier then moves to the other side of the equation, and computes R + e*P. If both sides match, she knows that the prover does in fact know the discreet logarithm, aka they are in possession of the underlying private key x and authorized to sign the message.

Going forward, we will refer to the prover as Peggy (starts with a P), and the verifier as Victor. Even though Peggy uses r (plaintext nonce) and x (private key) in the computation of the signature, Victor will be incapable of recovering these variables independently. A good analogy is trying to recover the primary ingredients of a cake (eggs, milk, flour) once they been blended into batter. Victor only sees the encrypted values R and P (which Peggy includes publicly when broadcasting the transaction) and plugs them into the equation. However, he is mathematically convinced of authenticity of the signature, because the only person who could make this equation balance is someone that possessed the secret values x and r.

Now, if Peggy were to find out what e value Victor was going to pick in advance, then she could cheat the whole protocol and forge a [seemingly] valid signature WITHOUT knowing x. Here’s how:

1. Peggy picks a random s value, not really caring what the value is. This s value will simply be a facade in her ploy to trick the verifier.

2. Peggy knows that during the verification process, Victor will take the signature and multiply it by G to see if the equation balances (bottom left formula in above graphic). So, she takes her random s value in step #1 and multiplies it by G.

3. With sG locked in, Peggy now turns and computes R by using the bottom right formula. She is able to do this because a) e was leaked by Victor in advance b) P is the public key that everyone in the world can see c) sG was just computed in step 2.

4. Peggy ships off the values (R,s) to Victor for verification.

Poor sucker Victor will plug Peggy’s signature value into the verification algorithm, and it will return a TRUE response. Now, if Victor was seeing the r and x values going into the construction of s = r + x*e, he would be able to spot the forgery. Unfortunately, if we gave Victor these secret values, we’d lose the entire value proposition of the signature, i.e. Peggy not having to reveal to the rest of the world what her secret private key is.

Also notice in our above description that if Peggy doesn’t have access to e in advance, she can’t back out a malicious R and trick Victor. Why? Because in the Schnorr protocol, literally the first thing we require is that Peggy commit to a R value (by choosing a nonce r, then computing R=r*G) BEFORE she receives Victor’s e challenge. In the malicious equation R = sG - e*P, we are using e as an INPUT variable. It’s common sense that we cannot use something as an input which we do not yet know, outside of randomly guessing what Victor’s e value may be ahead of time and constructing R that way. But given the massive parameters being used, we can say that the chances of such an event occurring are so astronomically small that they are negligible.

So, we can say one of two things happened if the equation balances: either Peggy truly does know the secret key x, or Victor slipped Peggy knowledge of e ahead of time, allowing her to craft a signature. However, since Victor is the one who’s trying to figure out this question, he will know whether or not he colluded, meaning the only conclusion left is that Peggy indeed knows the private key, and created the signature honestly.

Non-interactive Variations

The frustrations with the scheme described above are twofold. First, it requires both parties to communicate back and forth before the proof can be constructed. This is especially problematic for blockchains where we need the prover to be able to append some information to the chain and immediately walk away. Not every party will be online at all times, some could lose connection midway through the exchange, some might be malicious etc. Reliance on external parties in a real-time scheme is impractical, thus we prefer an autonomous alternative.

Secondly, interactive schemes are not publicly verifiable; again, a huge impediment to a blockchain-based system. The interactive model only works when the two parties involved want to demonstrate proof among themselves. Remember, if Peggy is slipped knowledge of Victor’s e before the scheme, she can forge a valid signature without knowing the private key. But what if another validator comes along (call her Carol) and wants to audit Peggy’s signature herself? Carol wouldn’t be able to tell if there was collusion involved between Peggy and Victor in the last trial. The only way Carol can derive conviction is if she forces Peggy to perform the trial again, using a different e value of Carol’s choosing. Peggy would then have to replay this simulation over and over as new verifiers show up.

This is where non-interactive proofs come into play. Using a technique called the Fiat-Shamir heuristic, we can transform any interactive proof of knowledge into a non-interactive one, where we cut out the back-and-forth commitment/challenge phases and skip straight to signature construction, without requiring any interaction from the verifier’s end until validation. Additionally, we gain a second property of public verification, where Peggy can issue a single signature that will sufficiently satisfy any validator in question.

How is this accomplished? By “automating” away the verifier’s role in the challenge phase. In the interactive sequence described above, the only relevant operation performed by the verifier is providing a source of randomness for the e value. With the Fiat Shamir transformation, we can replace that role with a hash function and receive the same feature, but in a publicly auditable fashion.

This is viable because by definition, hash functions take in arbitrary length input values, and map them to fixed outputs that are indistinguishable from random. It’s impossible to reverse engineer the input, given the output alone. Another key component is that hash functions are deterministic, meaning the inputs to the hash function can be replayed over and over, and each time the output stays the same. For example, below is a SHA256 hash table on the following input variables. Verify the digests here.

The hash function provides us with a form of native time ordering. In order to compute the output of a hash H(X), we need to choose an X value before we can evaluate H(X); i.e. if I want to find e= H(5), I need to select “5” before I can solve for e. We can apply this concept to our protocol and gain some coveted properties.

In the non-interactive Schnorr protocol, instead of e being provided by verifier, we reassign e= H(R). Notice that in our new definition, we are guaranteeing that R is determined before the e value is chosen, as the hash of something cannot be evaluated prior to that something being known.

Security threats exist in the interactive Schnorr protocol which are not exploitable in the non-interactive model. We acknowledged earlier that Victor, being the one choosing the random e value, can interpret whether or not Peggy knows the discrete logarithm. But this is only true under perfect security assumptions. What if the piece of hardware Victor is using to generate the random nonce is being key logged by Peggy? Or what if the RNG Victor is using is not truly random, and Peggy is able to guess ahead of time what e value might get spit out in advance? If this was the case, Peggy would be capable of forging a signature without the private key. Victor would have no way of knowing that he’d been compromised and would interpret the invalid signature as valid.

We eliminate these social engineering attacks (assuming the hash function is secure) simply by removing the verifier altogether. Signature construction is isolated around Peggy alone, and Peggy cannot trick herself. Even if she decides on an R value and computes its hash well in advance of generating the signature, the very act of her committing to the R value in her head means that the time ordering has been set. There is literally no possible way Peggy can obtain e prior to R unless she picks some e value first, then goes back after the fact and randomly guesses its preimage, which violates our definition of a secure hash function.

The other benefit provided by this model is universal conviction to any validator, not just Victor. In our interactive example, the only person confident that Peggy possesses knowledge is Victor, because he is the one providing the randomness to e. An outside observer Carol cannot know that Peggy and Victor aren’t colluding. With this new scheme, any external validator can derive the same conviction as Victor, because cheating the protocol would require breaking the hash function, which is assumed to be impossible.

In Part 2 of this series, I will explore an interesting cryptographic technique called chameleon hashes, which sets the a foundation for ring signature construction. I’ll also perform a step-by-step walk through of AOS rings, the first (and most basic) iteration in the ring signature family.